Google is infusing AI wherever possible, from operating systems, in-vehicle interfaces, and apps to home devices. Sharing his thoughts on advances in Artificial Intelligence (AI) at the Google I/O 2019 keynote, held on Tuesday, May 7, 2019, CEO Sundar Pichai said that Google is moving beyond its core mission of “organizing the world’s information.” AI is opening new doors across all of Google’s platforms, products, and services, which may add value to many of those products and help the company build revenue sources beyond ad sales.

Top Artificial Intelligence Announcements Made By Google In 2019

Artificial Intelligence Announcements from the Google I/O 2019 keynote: The Google I/O 2019 keynote showcased how machine learning (ML) is enabling new features in Search. For instance, to organize news results, Google is bringing its “Full Coverage” News feature to Search, where it will help identify different types of stories and explain how a story is being covered.

Some six months after launching its USD 25 million AI Impact Challenge, Google revealed the winners, who come from 12 nations. Each winner will use a Google grant of up to USD 2 million to apply ML to some of the world’s biggest challenges.

According to Google, the AI camera tool that debuted at last year’s Google I/O 2018 keynote is now working with partners such as retailers and museums. Its lens capabilities will be expanded to help users connect things in the physical world with digital information.

In a nearly two-hour presentation, Google showcased its artificial intelligence developments, including upcoming features such as Duplex on the web and the Google Nest Hub Max, an Assistant-powered video display device.

Google Duplex

Duplex is an AI system that understands natural language and can hold conversations much like a human. For instance, it can make a dinner reservation at a real restaurant, and if the reservation cannot be made, it will state the reason. Google Duplex is now coming to the web.

Google Nest Hub Max combines the Google Home Hub, a Nest camera, and the Google Home Max, and it also serves as a security camera.

Google gave ML Kit several new features, such as AutoML Vision Edge and object detection and tracking, which help developers add artificial intelligence to their mobile apps via Firebase.

At the conference, the company revealed that Google Assistant will soon be 10 times faster thanks to on-device machine learning.

Google has long emphasized using AI for the betterment of society. To that end, the company revealed three accessibility projects to help people with disabilities. The projects are:

- Project Diva: helps people give commands to Google Assistant without using their voice.

- Live Relay: helps people with hearing challenges by bringing live captions to videos. The feature will also come to phone calls, adding a live transcription of the current conversation for clarity.

- Project Euphonia: assists people with speech impairments.

Google’s AI Hardware Tools: Google Coral

In November 2019, Google made headlines with the launch of new AI hardware tools under the brand name “Coral,” which can be used by early-stage startups and large businesses alike. Coral is an Artificial Intelligence (AI) platform for building products with local AI. Well-known organizations such as Asus have used the platform to develop AI solutions, including ones for on-device intelligence.

Because Coral accelerates AI locally, it brings inference time down to 2 ms. Coral will work with other Google tools such as Google Cloud IoT (Internet of Things) and TensorFlow for connected-device administration.

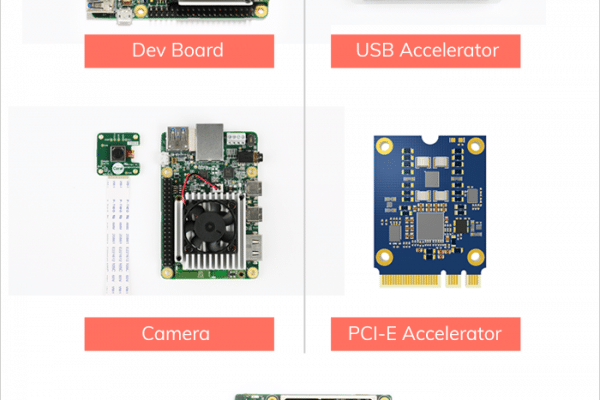

5 Products Offered By Google Coral

Picture Source: MobileAppDaily

- Coral Dev Board (USD 149.99) is a single-board computer that features an Edge TPU, Wi-Fi, Bluetooth, eMMC, and RAM to accelerate AI edge computing.

- USB Accelerator (USD 74.99) features an Edge TPU that brings machine learning to existing systems and can perform 4 trillion operations (tera-operations) per second.

- Camera module (USD 24.99) is a compatible 5-megapixel camera.

- PCI-E Accelerator

- System-on-Module (SOM) is a board-level circuit that integrates digital and analogue functions on a single board. It includes an Edge TPU, CPU, GPU, Bluetooth, Wi-Fi, and a secure element in a 40 mm x 48 mm pluggable module.
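
To put the quoted figures in perspective, the following is a minimal back-of-the-envelope sketch in Python. It assumes that the 4 trillion operations per second is a peak rate and that the 2 ms inference time applies to the same accelerator; the article states both numbers but does not tie them together. The function names are hypothetical and chosen only for illustration.

```python
# Figures quoted in the article; treated here as peak values, not measurements.
PEAK_OPS_PER_SECOND = 4_000_000_000_000  # USB Accelerator: 4 trillion ops/s
INFERENCE_TIME_MS = 2                    # quoted Coral inference time


def ops_budget(peak_ops_per_second: int, window_ms: int) -> int:
    """Upper bound on operations the accelerator could perform in window_ms."""
    return peak_ops_per_second * window_ms // 1000


def inferences_per_second(inference_time_ms: int) -> int:
    """Back-to-back inferences that fit in one second (integer division)."""
    return 1000 // inference_time_ms


if __name__ == "__main__":
    budget = ops_budget(PEAK_OPS_PER_SECOND, INFERENCE_TIME_MS)
    rate = inferences_per_second(INFERENCE_TIME_MS)
    print(f"Peak operations available in {INFERENCE_TIME_MS} ms: {budget:,}")
    print(f"Inferences per second at {INFERENCE_TIME_MS} ms each: {rate}")
```

Under these assumptions, a 2 ms window allows at most 8 billion operations, and inferences run back to back at that speed would reach 500 per second. Real throughput depends on the model and on data transfer overhead, so these are upper bounds rather than expected results.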

Artificial Intelligence Announcements at Google Cloud Next 2019 Conference

At the Google Cloud Next 2019 conference, held from April 9 to April 11, 2019, in San Francisco, Google launched AI Platform, which lets data scientists and developers build, test, and deploy machine learning (ML) models.

Google announced AI Hub, described as “the one-stop for everything AI,” where you can also easily deploy unique Google AI technologies and Google Cloud AI for experimentation and, ultimately, production on Google Cloud and other infrastructures.

AI Hub is currently in beta, and its public resources can be found on the AI Hub website. On the left-hand side, you will see categories such as Kubeflow pipeline, Notebook, Service, TensorFlow module, VM image, Trained model, and Technical guide. Supported input data types include image, text, audio, video, and others.

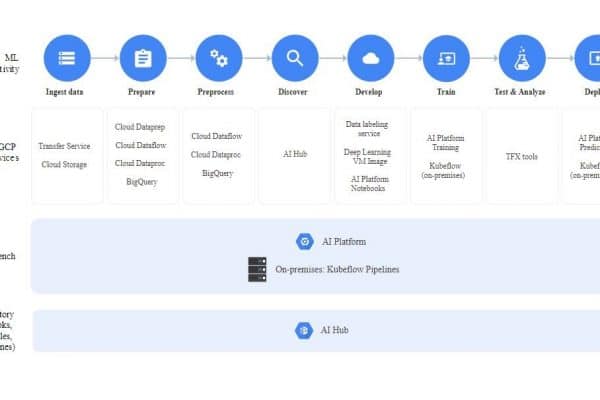

Google’s AI Platform Ecosystem: Google released a graphic showing how AI Platform can now support the entire development lifecycle of developers’ ML (Machine Learning) projects.

Google’s Cloud AutoML was the big announcement from Cloud Next 2019. It helps developers with limited machine learning expertise train high-quality models tailored to their business requirements. Google Cloud AutoML consists of five products:

- AutoML Natural Language for natural language processing, including sentiment and entity analysis and content classification

- AutoML Tables for applying machine learning to structured data at increased speed and scale

- AutoML Translation for translating languages dynamically

- AutoML Video Intelligence for searching and interacting with video content

- AutoML Vision for image processing on Google Cloud or at the edge


At Google Cloud Next 2019, Google also announced Document Understanding AI, which can help a company improve decision-making by using machine learning to classify, extract, and enrich data from scanned and digital documents at increased processing speed.

Contact Center AI, which was announced last year at Google Cloud Next ’18, has now launched in beta. It uses Google’s natural language understanding to help customers get quick answers to all kinds of questions.

In total, Google made 29 AI announcements at Google Cloud Next 2019.